Background

Media has always been the infrastructure through which societies form shared understanding. From oral traditions to the printing press, from broadcast to the internet, each leap in media technology reshaped how communities cohere, how knowledge spreads, and how collective decisions are made. For most of history, media operated from a single point of view ("SPOV"). Newspapers, broadcast networks, and state media each presented one editorial perspective: coherent and accountable, but gatekept by the few who controlled the press, the airwaves, or the state. The audience consumed; it did not participate. Despite this limitation, SPOV media served a critical function. It gave societies a shared narrative, a common set of facts to argue over, and editorial accountability when that narrative failed.

The internet shattered this model. Social media ushered in the era of multiple points of view ("MPOV"), where anyone could publish, respond, and distribute. This was a genuine democratization of voice, and it brought real benefits: marginalized perspectives found audiences, information moved faster than ever, monopolies on narrative were broken, and citizen journalism exposed truths that gatekeepers had suppressed.

The Problem

But raw MPOV, millions of voices with no filtering mechanism, is just noise. Platforms initially introduced algorithmic curation to organize content. But as volume exploded, curation became editorial power: deciding what gets seen and what doesn't. The algorithm was designed to maximize engagement, not truth. And engagement, as it turns out, favors outrage over nuance, simplification over depth, and emotional manipulation over substance. Unlike public institutions with a mandate to serve society, platforms are private companies that need to monetize, and amid millions of users with conflicting values, engagement was the most measurable metric available. This isn't a bug; it's the business model. The algorithm serves advertisers, not communities, and what drives ad revenue drives the feed.

The consequences are not abstract. Across democracies, we see declining trust in institutions, erosion of shared facts, and political violence fueled by algorithmically amplified disinformation — from the U.S. Capitol insurrection to the storming of a Seoul courthouse. In the worst cases, social media has contributed to genocide: a UN investigation concluded that Facebook played a "significant role" in the displacement of nearly 900,000 Rohingya.

These are not edge cases. They reveal what happens when engagement is the only metric. MPOV gave everyone a voice — but the algorithm decides which voices get heard. It rewards what captures attention, which pulls people into addictive feedback loops, narrows their information diet into echo chambers, and gradually hardens mild disagreements into tribal hostility. The end result is a media landscape that fragments the very shared reality it was supposed to enable.

How We Got Here

This outcome is structural, not accidental.

1. [Excess Supply] Content is simply too abundant. AI is accelerating this exponentially — soon, AI agents will generate and distribute content autonomously at a scale no human can keep up with.

2. [Algorithm as Editor] Humans cannot process it all, so algorithms stepped in to filter the noise on our behalf and became the editor. They decide what gets surfaced, recommended, and rewarded.

3. [Governance Gap] Corporations and profit maximizers own the platforms — and therefore the algorithm. The most powerful editorial force in modern media is controlled not by the communities it serves, but by shareholders seeking returns, free from democratic oversight.

4. [Ad Dependency] Advertising is the primary revenue source — so the algorithm answers only to advertisers’ needs. This forces platforms to compete for attention at any cost. Advertisers pay for immediate attention, not long-term media value. We are not the customers of these platforms — we are the product, packaged and sold to advertisers.

The result: Users and creators who live with the media have no say in how it operates, and no mechanism to align the algorithm with their own values. Media no longer serves society—we serve it. As AI agents ruthlessly maximize shareholder profit, our subjugation will only deepen.

The Root Cause: Governance → Extractive Behavior

The root cause is governance that incentivizes extractive behavior. To recap:

1. Governance: Corporate ownership and advertising as the primary revenue source create an incentive structure that optimizes for attention, not community value.

2. Behavior: This incentive structure is embedded in the algorithm, which enforces it across the entire network — surfacing what captures attention and burying what doesn't.

- Creators — human or AI — produce what the algorithm rewards.

- Audiences are consumed, not served.

Under the current incentive structure, the damage extends beyond media — the entire web becomes an attention trap at civilizational scale. AI agents can produce content orders of magnitude faster than humans, and they will follow incentive structures with brute, mechanical precision — no conscience, no fatigue, no hesitation. They will flood the web with whatever captures attention most effectively, regardless of truth, depth, or consequence. At that point, it is worth asking whether a society that cannot form shared understanding through its media can continue to function as a society at all.

We must change the governance to change the behavior. Today's governance serves profit maximizers, centering on attention and engagement. It should center on the values of the people who consume, create, and live with the media. Governance must incentivize every participant to pursue those values. As AI becomes a dominant actor in media, aligning its incentives with human values is not optional. Fail, and we won't just have media that consumes us — we will have AI that directs us.

The Vision: Community-Governed Media

The path forward is straightforward. First, build governance on values that the community defines. Second, design incentives and enforcement that lead every participant to optimize for them.

1. Governance

- Public Governance Grounded in Collective Values: Governed by the public, not corporations. Decision-making power belongs to the communities that live with the media, not to shareholders seeking profit. This allows platforms to optimize for collectively defined values, not just engagement and revenue. Wikipedia shows what this can achieve: through public governance and a shared commitment to neutrality, verifiability, and open knowledge, it has become one of the world's most trusted records of collectively agreed-upon knowledge.

- Network Value Capture as a Business Model: Media's assets go beyond attention to include networks of communities with aligned values and the capacity to mobilize them. Beyond advertising revenue, stakeholders can profit from growing network value over time, as the community becomes more cohesive, valuable, and attractive to both existing and potential participants. Like property owners who invest in increasing their asset value, media stakeholders are incentivized to build long-term network value rather than extract short-term attention. Bitcoin is the most successful example: its value derives not from revenue or cash flow but from the network itself. The more participants who trust and adopt it, the more valuable the network becomes for everyone holding a stake in it.

2. Behavior

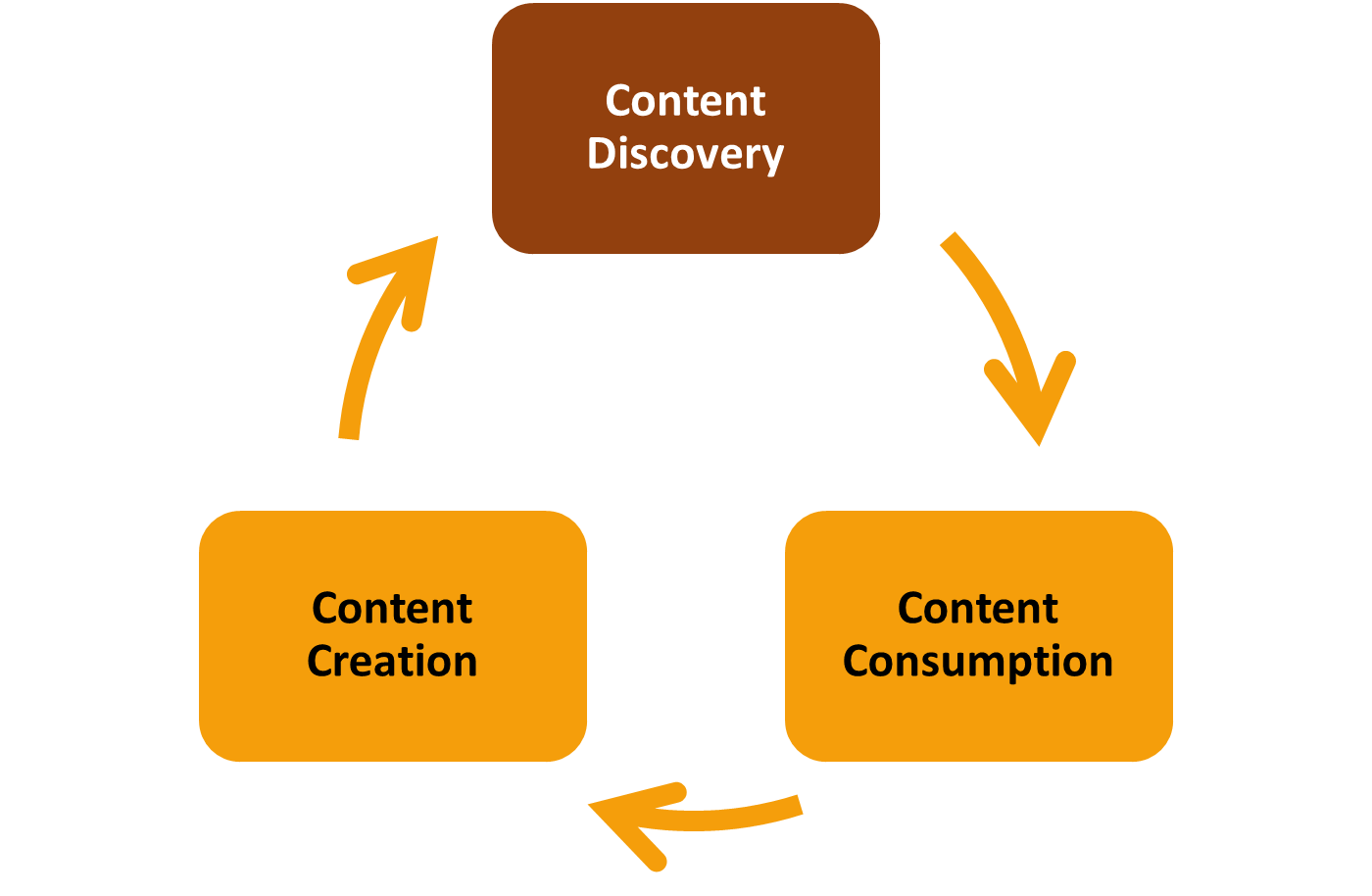

Realigning Content Discovery with Collective Values: In the media cycle, the algorithm governs content discovery — the critical juncture between creation and consumption. But it is more than a neutral intermediary. Whoever governs it shapes the behavior of every actor in the network.

When community values govern the algorithm, and network value aligns stakeholders' long-term interests, the entire media cycle transforms. Creators — human and AI alike — produce content aligned with community values because that is what gets surfaced and rewarded. Distributors promote what the community endorses because their stake grows with the network. Audiences discover what genuinely matters. And AI — rather than exploiting human attention — reinforces the values that communities chose for themselves.

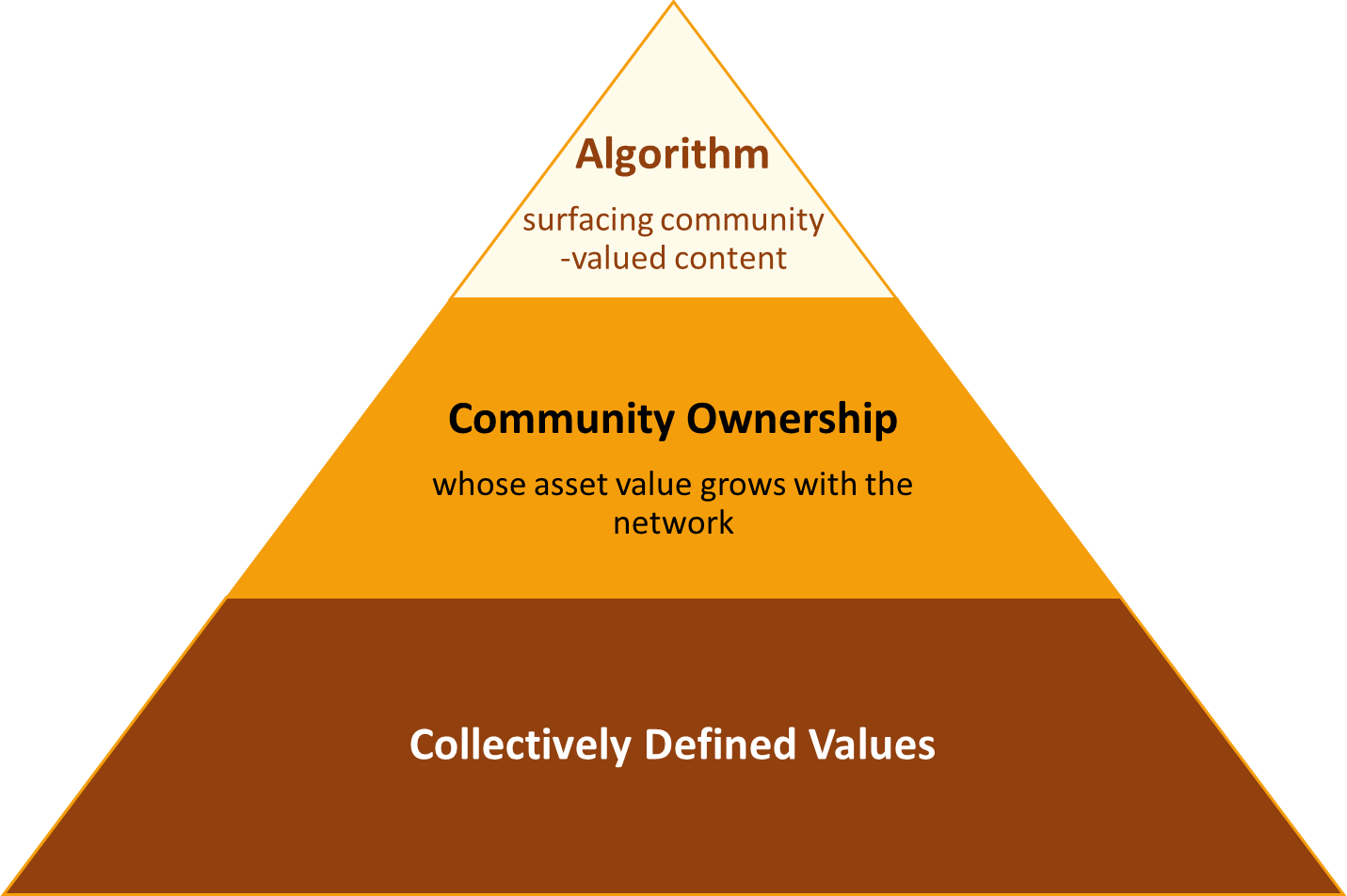

What we need is MPOV media governed by open communities, surfacing valuable content as communities define it. In this media, human values direct AI — not the other way around. The foundation is values, collectively defined. Above it, community ownership — where economic benefits grow by adhering to those values. And on top, an algorithm for content discovery that reinforces both.

Half of the Equation: Sound Tokenomics

Tokenization on blockchain provides the infrastructure for public governance and network value capture. The network is permissionless — anyone can participate. No single entity owns it, and all governance token holders have a say in collective decision-making. Tokens are freely tradable, making it straightforward to capture network value as it grows.

But designing governance that truly embeds community values — and successfully bootstraps real network value — requires careful thought. Fortunately, we have Bitcoin as a north star and failed projects as cautionary tales. The rules of thumb are clear:

A. Define the network's core value and reward its long-term contributors above all else.

Bitcoin's supreme value is ledger integrity. It rewards miners who strengthen that integrity, and nothing else. Long-term contributors who believed in the network's value invested billions in infrastructure to secure its transactions. By contrast, projects that disproportionately rewarded founders, VCs, and early insiders through pre-mines misaligned incentives from the start. Token concentration and unequal voting power discouraged future contributors (creators, moderators, and distributors alike) from adding meaningful value. The distribution logic must make the network's highest priority unmistakable.

B. Design token supply to incentivize the contributions needed at each stage.

Bitcoin's initial reward of 50 BTC per block during its first four years secured the critical early hashrate. Subsequent halvings, every 210,000 blocks, capped total supply at just under 21 million BTC and created scarcity, encouraging participation from those seeking a reliable digital store of value. By contrast, Steemit's inflationary model constantly diluted ownership. Tokens became a currency to spend immediately, not digital real estate worth holding. During the early bootstrapping phase, emission rates and reward structures should be calibrated to attract long-term builders and investors over short-term extractors and speculators, rewarding scarce, high-value contributions when they matter most.
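The halving arithmetic is easy to check directly. The sketch below follows Bitcoin's subsidy rule as commonly described (50 BTC per block, halved every 210,000 blocks, computed in integer satoshis with a right shift) and sums it to show where the roughly 21 million BTC cap comes from. It ignores transaction fees and any real-world deviation from the schedule, and the function names are our own.

```python
# Simplified model of Bitcoin's block subsidy schedule.
# Amounts are in satoshis (1 BTC = 100,000,000 satoshis).
COIN = 100_000_000
INITIAL_SUBSIDY = 50 * COIN
HALVING_INTERVAL = 210_000  # blocks, roughly four years at ~10 minutes per block


def block_subsidy(height: int) -> int:
    """Return the newly minted coins for the block at `height`."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:  # mirrors Bitcoin Core's guard against over-shifting a 64-bit int
        return 0
    return INITIAL_SUBSIDY >> halvings


def total_supply() -> int:
    """Sum the subsidy over every halving era until it reaches zero."""
    total = 0
    era = 0
    while True:
        reward = block_subsidy(era * HALVING_INTERVAL)
        if reward == 0:
            return total
        total += reward * HALVING_INTERVAL
        era += 1


if __name__ == "__main__":
    for era in range(4):
        print(f"era {era}: {block_subsidy(era * HALVING_INTERVAL) / COIN} BTC per block")
    print(f"supply cap: {total_supply() / COIN} BTC")
```

Because each halving rounds down to whole satoshis, the sum lands slightly below 21 million BTC rather than exactly on it.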

The Missing Piece

In theory, governance through sound tokenomics is achievable. But the hard problem remains: linking community-defined values to the incentive mechanism. Values are inherently subjective. In this model, the media network must reward those who create and distribute content that reflects the community's values. Simply rewarding content via quantitative metrics (e.g., upvote count) cannot capture qualitative values and is easily gamed, as Steemit demonstrated. Blockchain alone cannot achieve qualitative consensus on whether content adheres to community values or is simply spam.

What's needed is an algorithm that bridges governance and reality — one that enforces community values and implements the incentive system in practice. But this presents two fundamental challenges. First, while LLMs have made it technically feasible to evaluate content against subjective criteria, ensuring such evaluation is reliable and scalable remains a hard problem. Second — and more fundamentally — evaluating content against subjective community values cannot rely on a single entity's judgment. It demands consensus from diverse, independent evaluators — which no centralized process can guarantee.

The Other Half: Decentralized AI

A centralized AI editorial mechanism that evaluates content against inherently subjective values is fragile: it is difficult to ensure the fairness and reliability needed to earn community trust. At the same time, no committee, human or algorithmic, can scale to continuously process the diverse content in MPOV media.

Decentralized AI ("dAI") changes this equation.

Protocols like Bittensor demonstrate how decentralized machine intelligence provides the missing piece. Bittensor enables multiple specialized AI agents to compete on tasks, with their contributions evaluated and rewarded through a novel consensus mechanism called Yuma Consensus. Where Bitcoin solves consensus for deterministic, binary truth, Yuma Consensus enables continuous consensus on subjective evaluations—exactly what's needed to judge content quality against community-defined values.
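To make "consensus on subjective evaluations" concrete, here is a minimal sketch loosely modeled on the publicly described core of Yuma Consensus: each validator scores each miner, the network takes a stake-weighted median of those scores per miner, and any score above that median is clipped before rewards are computed. The function names, the `kappa = 0.5` default, and the toy stakes are illustrative assumptions; the real protocol also tracks validator bonds and dividends, which this omits, and this is not Bittensor's code.

```python
from typing import List, Sequence


def stake_weighted_consensus(
    scores: Sequence[float], stakes: Sequence[float], kappa: float = 0.5
) -> float:
    """Return the highest score that validators holding at least `kappa`
    of total stake rated at or above (a stake-weighted median for kappa=0.5)."""
    threshold = kappa * sum(stakes)
    supporting = 0.0
    # Walk scores from highest to lowest, accumulating the stake behind them.
    for score, stake in sorted(zip(scores, stakes), reverse=True):
        supporting += stake
        if supporting >= threshold:
            return score
    return 0.0


def yuma_style_incentives(
    weights: List[List[float]], stakes: List[float], kappa: float = 0.5
) -> List[float]:
    """weights[v][m] is validator v's score for miner m, in [0, 1].
    Returns each miner's normalized share of rewards."""
    n_miners = len(weights[0])
    ranks = []
    for m in range(n_miners):
        column = [row[m] for row in weights]
        consensus = stake_weighted_consensus(column, stakes, kappa)
        # Clip scores above consensus so an outlier cannot inflate a miner.
        clipped = [min(w, consensus) for w in column]
        ranks.append(sum(s * w for s, w in zip(stakes, clipped)))
    total = sum(ranks)
    return [r / total if total > 0 else 0.0 for r in ranks]


if __name__ == "__main__":
    stakes = [40.0, 35.0, 25.0]
    weights = [
        [0.8, 0.2],
        [0.7, 0.3],
        [0.1, 1.0],  # minority validator colluding with miner 1
    ]
    print(yuma_style_incentives(weights, stakes))
```

The clipping step is the design choice that matters: a validator can still score a miner generously, but only the portion backed by a stake-weighted majority counts. In the example, clipping holds miner 1's raw rank to 26 instead of the 43.5 it would reach if the colluding validator's score counted in full.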

This architecture unlocks two critical capabilities:

A. Decentralized Consensus on Subjective Values

Yuma Consensus makes it possible to reach distributed agreement on inherently subjective criteria. Communities can define rubrics (what constitutes "scientific rigor" or "journalistic integrity"), and the network can converge on evaluations without a central authority. Because the rubrics and resulting scores are open and no single evaluator controls the outcome, the system avoids the black-box problem of proprietary ranking and makes systematic bias harder to introduce.

B. Reliability & Scalability

The system functions as LLM-as-judge at scale. Multiple competing AI agents cross-validate each other's work, ensuring reliability through redundancy. Unlike human editorial committees, AI evaluation can be made consistent and fast enough to keep pace with web-scale content production. Token-incentivized, permissionless competition among agents makes the system economically sustainable as it scales.

The result: an editorial function that can enforce community values without centralization — a decentralized oracle for incentive and penalty administration.

We named it Caster. Feed it a community's rubric and content, and it produces reliable, scalable, decentralized consensus scores. These scores can partially or wholly power the editorial algorithm that governs content discovery — finally linking community-defined values to the incentive mechanism.

In this future, AI doesn't replace human judgment — it scales it.

How It Works: Community-Governed Media Ecosystem

1. Rubric as Community

Rubrics are the foundation: they make community values explicit and actionable. Each community collectively defines its own rubrics, explicit criteria for what constitutes quality content. Rubrics are open-source documents, collaboratively authored and versioned like code repositories. Any member can propose changes; adoption is decided by an on-chain community vote weighted by stake.

For example, a citizen journalism community might define rubrics such as: source attribution (are claims verified?), balance (are multiple perspectives represented?), depth (does it go beyond surface reporting?), and originality (does it add new information?). These rubrics become the constitutional standards against which all content is measured — transparent, evolving, and collectively owned.
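One way to make a rubric machine-readable is to treat it as structured data with weighted criteria. The sketch below encodes the citizen journalism example above; the field names, the weights, and the weighted-average scoring rule are hypothetical choices for illustration, not a Caster format.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Criterion:
    question: str
    weight: float  # relative importance; weights need not sum to 1


# Hypothetical encoding of the citizen journalism rubric; weights are illustrative.
CITIZEN_JOURNALISM_V1: Dict[str, Criterion] = {
    "source_attribution": Criterion("Are claims verified?", 0.35),
    "balance": Criterion("Are multiple perspectives represented?", 0.25),
    "depth": Criterion("Does it go beyond surface reporting?", 0.20),
    "originality": Criterion("Does it add new information?", 0.20),
}


def rubric_score(rubric: Dict[str, Criterion], scores: Dict[str, float]) -> float:
    """Combine per-criterion scores in [0, 1] into one weighted average."""
    missing = rubric.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(c.weight for c in rubric.values())
    return sum(c.weight * scores[name] for name, c in rubric.items()) / total_weight


if __name__ == "__main__":
    article = {"source_attribution": 1.0, "balance": 0.5, "depth": 0.5, "originality": 0.0}
    print(round(rubric_score(CITIZEN_JOURNALISM_V1, article), 3))
```

Keeping the rubric as plain data is what lets it be diffed, versioned, and voted on like any other open-source file.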

Rubrics are not just governance documents; they are the identity of the media itself. The more compelling and universal the values a rubric embodies, the greater the potential of that media community to grow. Rubric owners who define the editorial lens would effectively become media operators, holding significant long-term stake as content distributors. The more responsibly they steward these values, the more the community thrives, and the more valuable their stake becomes.
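The stake-weighted adoption rule for rubric changes can be sketched as a simple tally. The `RubricProposal` structure, the quorum, and the approval threshold below are hypothetical; an on-chain implementation would also need details this ignores, such as snapshotting stake at proposal time.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class RubricProposal:
    """A proposed rubric change (hypothetical structure)."""
    new_version: str
    votes: Dict[str, bool] = field(default_factory=dict)  # member -> approve?

    def cast(self, member: str, approve: bool) -> None:
        self.votes[member] = approve  # a later vote replaces an earlier one


def is_adopted(
    proposal: RubricProposal,
    stakes: Dict[str, float],
    quorum: float = 0.2,     # hypothetical: share of all stake that must vote
    threshold: float = 0.5,  # hypothetical: share of voting stake that must approve
) -> bool:
    # Members without stake can vote, but their votes carry zero weight.
    yes = sum(stakes.get(m, 0.0) for m, ok in proposal.votes.items() if ok)
    no = sum(stakes.get(m, 0.0) for m, ok in proposal.votes.items() if not ok)
    total_stake = sum(stakes.values())
    if total_stake == 0 or (yes + no) / total_stake < quorum:
        return False
    return yes > threshold * (yes + no)


if __name__ == "__main__":
    stakes = {"alice": 50.0, "bob": 30.0, "carol": 20.0}
    proposal = RubricProposal("citizen-journalism-v2")
    proposal.cast("bob", False)
    proposal.cast("carol", True)
    print(is_adopted(proposal, stakes))
```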

2. Caster as Editorial Desk

Caster enforces rubrics at scale. When content is submitted, competing AI agents independently score it against the community's rubrics. These subjective evaluations converge into reliable scores without any single entity controlling what rises or falls. Consensus scores determine both visibility and reward eligibility, unifying content moderation and content discovery (traditionally separate functions, each controlled by a centralized authority) into a single transparent, decentralized mechanism.
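The editorial desk flow can be sketched end to end: several agents score a piece against each criterion, the scores are aggregated, and the result gates both visibility and rewards. Here a plain per-criterion median stands in for Caster's actual consensus mechanism, and both thresholds are hypothetical community parameters.

```python
from statistics import median
from typing import Dict, List

# Hypothetical community parameters, not values defined by Caster.
VISIBILITY_THRESHOLD = 0.5
REWARD_THRESHOLD = 0.7


def consensus_scores(agent_scores: List[Dict[str, float]]) -> Dict[str, float]:
    """Median of each criterion across independently scoring agents."""
    criteria = agent_scores[0].keys()
    return {c: median(a[c] for a in agent_scores) for c in criteria}


def editorial_decision(
    agent_scores: List[Dict[str, float]], weights: Dict[str, float]
) -> Dict[str, object]:
    """Turn raw agent scores into one score plus visibility and reward flags."""
    per_criterion = consensus_scores(agent_scores)
    score = sum(weights[c] * per_criterion[c] for c in weights) / sum(weights.values())
    return {
        "score": score,
        "visible": score >= VISIBILITY_THRESHOLD,      # surfaced in discovery
        "reward_eligible": score >= REWARD_THRESHOLD,  # earns tokens
    }


if __name__ == "__main__":
    weights = {"attribution": 0.5, "originality": 0.5}
    agents = [
        {"attribution": 0.9, "originality": 0.6},
        {"attribution": 0.8, "originality": 0.7},
        {"attribution": 0.1, "originality": 1.0},  # an outlier agent
    ]
    print(editorial_decision(agents, weights))
```

The same score drives both flags, which is the sense in which moderation and discovery become one mechanism: content below the lower threshold is neither surfaced nor paid.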

Decentralized AI judges may produce more rubric-faithful evaluations than human judges alone. Human evaluation is slow and prone to bias, fatigue, and inconsistency. Competing AI agents are economically incentivized to adhere strictly to the rubric, because deviation is penalized by the consensus mechanism itself. This resolves the core tension of introducing editorial intervention within a decentralized ethos.
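One simple way to express "deviation is penalized" is to pay agents from a shared pool in proportion to how close their score lands to consensus. The linear closeness rule and the `tolerance` parameter below are illustrative assumptions, not the mechanism Caster or Bittensor uses.

```python
from typing import Dict


def agent_rewards(
    agent_scores: Dict[str, float],
    consensus: float,
    pool: float,
    tolerance: float = 0.2,  # hypothetical: deviation at which reward drops to zero
) -> Dict[str, float]:
    """Split `pool` among agents in proportion to how close each agent's score
    is to consensus; agents off by `tolerance` or more receive nothing."""
    closeness = {
        agent: max(0.0, 1.0 - abs(score - consensus) / tolerance)
        for agent, score in agent_scores.items()
    }
    total = sum(closeness.values())
    if total == 0:
        return {agent: 0.0 for agent in agent_scores}
    return {agent: pool * c / total for agent, c in closeness.items()}


if __name__ == "__main__":
    scores = {"agent_a": 0.80, "agent_b": 0.75, "agent_c": 0.20}
    print(agent_rewards(scores, consensus=0.80, pool=100.0))
```

A rule like this rewards agreement with consensus, so it only tracks rubric fidelity if most agents score faithfully; that is why independence and permissionless competition among agents matter.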

The Caster Ecosystem: Diverse Media Communities on Decentralized AI

Those who define and steward rubrics will likely become the community’s leaders — effectively media operators. They will shape the platform's parameters: the weight between engagement and Caster scores in the algorithm, what constitutes fundamental contribution, and how rewards are distributed. These are decisions for each community's governance, and diverse experiments will emerge.
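The engagement-versus-Caster weighting can be pictured as a single blending parameter in the ranking function. This is a hypothetical sketch: it assumes both signals are already normalized to [0, 1], and `Post`, `rank_feed`, and `caster_weight` are illustrative names for a value a community would set through governance.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    post_id: str
    caster_score: float  # consensus score in [0, 1]
    engagement: float    # engagement signal normalized to [0, 1]


def rank_feed(posts: List[Post], caster_weight: float) -> List[str]:
    """Order posts by a blend of Caster score and engagement.
    caster_weight = 1.0 ranks purely on values; 0.0 purely on engagement."""
    if not 0.0 <= caster_weight <= 1.0:
        raise ValueError("caster_weight must be in [0, 1]")

    def blended(p: Post) -> float:
        return caster_weight * p.caster_score + (1 - caster_weight) * p.engagement

    return [p.post_id for p in sorted(posts, key=blended, reverse=True)]


if __name__ == "__main__":
    posts = [Post("clickbait", 0.2, 0.9), Post("investigation", 0.9, 0.4)]
    print(rank_feed(posts, caster_weight=0.2))
    print(rank_feed(posts, caster_weight=0.8))
```

Flipping one governance parameter reverses the feed order here, which is the lever the paragraph above describes.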

Some communities will prioritize engagement; others will prioritize value adherence. Some will use staking to amplify voices that pursue truth; others will favor lighter participation models that lower the barrier to entry and encourage broader content activity (e.g., passively penalizing spam through token costs only). The design space is wide open.
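The "token costs only" model reduces to expected-value arithmetic: if posting costs a fee and only content that clears the rubric earns a reward, low-quality posting loses tokens on average. The function names, fee, reward, and probabilities below are made-up numbers for illustration.

```python
def expected_net_reward(p_reward: float, reward: float, posting_fee: float) -> float:
    """Expected tokens gained per post: probability of clearing the rubric's
    reward threshold times the payout, minus the fee paid to post."""
    return p_reward * reward - posting_fee


def break_even_probability(reward: float, posting_fee: float) -> float:
    """Minimum chance of earning a reward for posting to pay off on average."""
    return posting_fee / reward


if __name__ == "__main__":
    FEE, REWARD = 1.0, 20.0  # made-up numbers
    print("spam:", expected_net_reward(0.01, REWARD, FEE))
    print("quality:", expected_net_reward(0.40, REWARD, FEE))
    print("break-even:", break_even_probability(REWARD, FEE))
```

Under these assumptions a spammer needs better than a 1-in-20 chance of passing the rubric just to break even, so the fee penalizes spam without any explicit moderation step.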

But if, as history suggests, humans value quality content over clickbait in the longer term, then the media networks of communities that reinforce deeper, more universal values will prevail. The media networks that win will be those that draw out human originality while leveraging AI's consistency to broaden and deepen participation. In an MPOV landscape where diversity of voice is preserved, the media that makes humans more accountable and incentivizes AI to seek truth will be the media that advances humanity.

Call to Action

AI is flooding the web with content no human can verify. Shared narratives are fracturing. Communities are turning on each other, radicalized by algorithms that profit from division. The web we built to connect us is being corrupted — and it will only accelerate.

No platform will fix this. No regulation can keep pace with AI. It falls to us.

We are building Caster Protocol as the decentralized foundation for this new media ecosystem centered on community values. Now we need communities to bring it to life — by defining the values that shape how we communicate, connect, and make sense of the world together. Whether you build on Caster Protocol or bring your community's values to a rubric, every contribution is a step toward reclaiming the web. Every rubric is the seed of a new medium.

If you believe in these principles, join us:

- Media serves people, not advertisers. It should optimize for collective values of the people who live with it — not the profit motives of a few.

- Community governance grounded in collective values. Built on transparent, permissionless infrastructure where all decisions live on-chain.

- Contribution and accountability earn ownership. Long-term contributors earn deeper stake and influence, with real accountability for the values they uphold.

- Humans set the direction, AI serves it. Humans define values and goals. AI scales them faithfully — amplifying human originality, not replacing human judgment.

Media is how society forms shared reality. It should serve the people who live with it. Let's build one that serves us back — owned by its contributors, governed by collective values, and enforced by decentralized arbiters of value.

Let's take back the commons.